“I give [this watch] to you not that you may remember time, but that you might forget it now and then for a moment and not spend all your breath trying to conquer it. Because no battle is ever won he said. They are not even fought. The field only reveals to man his own folly and despair, and victory is an illusion of philosophers and fools.” – William Faulkner, The Sound and the Fury

Living and writing at a time when the legends of the Civil War and the fables of the Reconstruction were still part of living memory, William Faulkner’s work wrestled with the ideas of history, memory, mythology, and heritage, and with the challenges of one’s immediate existence amid such a monumental backdrop.

The notion of contending with one’s history extends far beyond the literary world and holds a place of eminence in the world of enterprise system implementation. Almost without exception, at the onset of an Epicor implementation project I find myself working through options with customers on how best to manage the historical transactional data in their legacy system. Customers find themselves in a paradoxical situation: they want to move forward with a new system that can meet their upcoming strategic goals and initiatives, but they also want to be able to reference the rich history built up in their legacy system. It is as if they wish to rip all the lath, plasterwork, and wainscoting off their old home and slap it onto the walls of their new dwelling. But as any carpenter would attest, the fit of old materials is never perfect. Often, it is downright shoddy.

Given the challenges of layering the old with the new, I often work with customers to help them understand the perils and promises of their data desires. I try to find a way to satisfy the company’s need for its history without compromising the new installation. The fundamental question is whether a given company can bring over historical transactional data from its legacy system. Transactional data may include Quotes, Sales Orders, Purchase Orders, Work Orders, Shipments, Receipts, Invoices, etc. When we go over the options, there are normally three ways in which a company can reference its historical data as part of a new Epicor implementation:

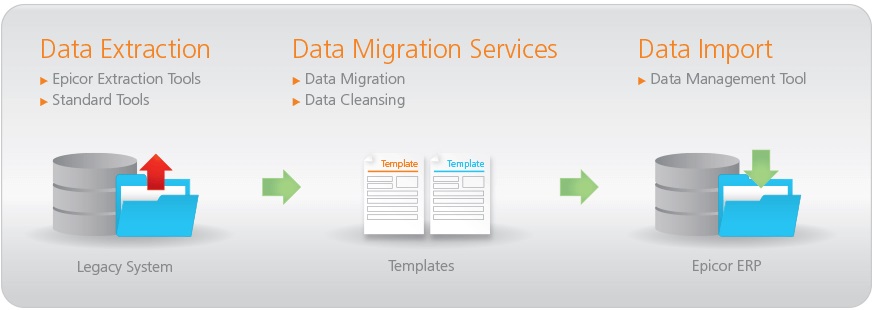

- The transactional data can be converted using Epicor’s Data Management Tool (DMT). That is, the legacy data can be transformed to fit the format and setup of the Epicor environment and loaded into the database as if it were a live transaction load.

- The transactional data can be loaded into Epicor user-defined (UD) tables and referenced from within the application using BAQs and Dashboards.

- The legacy database can be connected to the Epicor application using external data source configurations and then queried using external BAQs.

The primary concern is how to satisfy the desire to actually convert legacy transactional data into Epicor’s database without compromising the historicity of the data itself, and without gumming up the system with a lot of noise. It’s hard enough to correctly convert live records, let alone the mountains of ancient data. From my own experience and from the experiences of my coworkers, we do not consider it a recommended best practice to convert historical transactional data into Epicor’s standard table structure when implementing Epicor ERP. The following reasons underlie this recommendation:

- When implementing or reimplementing, the base setup data often changes, whether in naming conventions, number of records, or values. This can make the transformation of legacy data labor-intensive and error-prone.

- ERP data is by its nature integrated, so loading transactional records can trigger unexpected effects. For instance, should an Epicor customer elect to import the Purchase Orders from the legacy environment, the system would plan for these POs to be received and alter system planning accordingly. To avoid this, the records would need to be closed, but the data would then fail to reflect the original legacy data, in which the Purchase Orders were closed through the receiving and invoicing process at a much earlier date.

- To load transactional data in the form in which it exists in the legacy environment, the entire collection of related records needs to be loaded. For instance, if a customer wanted representative purchase order history in the Epicor environment, the related PO Receipt and AP Invoice records would also need to be loaded. This is, among other things, a tremendous amount of work.

- In some cases, Epicor’s business logic sets fields itself and does not allow users to update them. For instance, Sales Order and Purchase Order line numbers are system-assigned and not user-modifiable. This makes it difficult to load historical data that accurately reflects the legacy data: what was PO Line 2 in the legacy system may be converted to PO Line 1, simply because it was the first line to be loaded.

- Transactions have general ledger implications. Loading transactions can thus have unexpected consequences that affect WIP, inventory, and their related GL accounts. For instance, should the user import Purchase Order Receipt records, the transaction date would not by default reflect the actual date on which the transaction occurred. Should the customer wish to backdate the transactions, the necessary fiscal periods would need to be created. If the load does not include all transactions, the General Ledger will be inaccurate and will require effort to reconcile and correct.

An alternative to performing a DMT load into the standard tables is to store this data in Epicor UD tables. This avoids many of the perils above, but requires the implementing customer to construct querying tools (BAQs and Dashboards) to retrieve and present the information. Moreover, I’ve found that historical data tends to be used less than anticipated, and in many cases its use drops off significantly shortly after cutover. I recently had one customer go to great lengths to convert data to UD tables and construct the necessary querying tools to view it, only to forget almost entirely about these capabilities amid the blooming, buzzing confusion of cutover. By the time things had settled down, they had already built enough living history into their database to move forward, and the UD tables were all but forgotten.

A final option is to access the legacy database using external data source connections and query it using external BAQs. This option is normally the simplest of the three, as it saves the time of converting the data up front, though it likewise requires building the querying tools needed to retrieve and present the data. Depending on the age and format of the legacy database, the external data source option might not be feasible. In some implementations, I have seen customers convert their legacy databases to SQL format at cutover for the express purpose of querying their history ad hoc via external BAQs. In other cases, I’ve seen customers fall back to the UD table option when the other options were seen as too labor-intensive.

Given these options, I recommend limiting data conversion at cutover to master files and open transactions, and using external data source connections to the legacy database, UD table conversion, or both to provide access to historical legacy data.
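The recommendation above amounts to a simple triage rule at cutover. As a minimal sketch, assuming a hypothetical legacy export in which each purchase order carries a status flag, the split between records to convert with DMT and history to leave in the legacy database (or load into UD tables) might look like this in Python. The `LegacyPO` type, its fields, and the `"OPEN"` status value are illustrative assumptions, not Epicor or DMT structures.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


# Hypothetical legacy record shape (an assumption); real legacy exports will differ.
@dataclass(frozen=True)
class LegacyPO:
    po_num: str
    status: str  # assumed values such as "OPEN" or "CLOSED"


def partition_for_cutover(
    pos: Iterable[LegacyPO],
) -> Tuple[List[LegacyPO], List[LegacyPO]]:
    """Split legacy POs into open records to convert and closed history to leave behind.

    Anything not marked OPEN (case-insensitive) is treated as history.
    """
    to_convert: List[LegacyPO] = []
    to_archive: List[LegacyPO] = []
    for po in pos:
        if po.status.strip().upper() == "OPEN":
            to_convert.append(po)  # candidate for a DMT load into the standard tables
        else:
            to_archive.append(po)  # left for external BAQs or a UD table load
    return to_convert, to_archive


if __name__ == "__main__":
    sample = [LegacyPO("1001", "CLOSED"), LegacyPO("1002", "OPEN"), LegacyPO("1003", "closed")]
    convert, archive = partition_for_cutover(sample)
    print([p.po_num for p in convert])  # ['1002']
    print([p.po_num for p in archive])  # ['1001', '1003']
```

In practice the "open" test would be richer than a single flag, but the principle holds: only live records flow into the standard tables, and everything else stays reachable as history.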

When it comes to the art of crafting prose, William Faulkner forgot more than most of us will ever know. Elsewhere, in Requiem for a Nun, Faulkner wrote that “[t]he past is never dead. It’s not even past.” In a similar vein, the attempt to bring old historical data into a new ERP system is itself an act of rewriting history, and in doing so, one sacrifices accuracy for availability. Moreover, when implementing a new system, a company quickly learns that the high cost of retaining history is something it would soon like to forget. As a company builds a new history in its new system, the old history loses value with such surprising rapidity that it soon takes its place at the back of the database, taking up space and gathering data dust, like a childhood diary left on a top shelf and forgotten.